AI Translated a Curse Into a Blessing. And You Want It to Run Your Company?

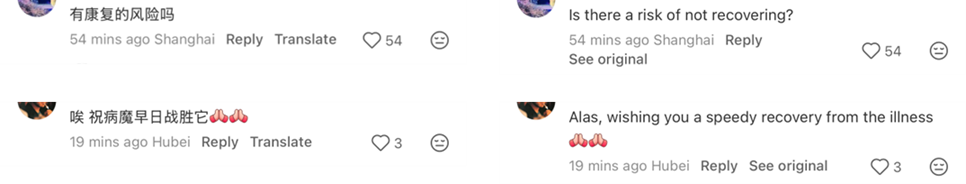

On April 24th, a highly controversial public figure in the Middle East announced a cancer diagnosis. While scrolling through a social media platform, I noticed the Chinese-language comment section beneath the news. Out of curiosity, I tapped the platform’s built-in AI translation button to see what English-language readers would be getting.

The result was beyond absurd. This was not a case of “lost in translation.” It was a complete inversion. A blunt curse had been transformed into a warm blessing. Staring at the same comment on the same screen, Chinese and English readers were being split into two diametrically opposed information realities. And there was not a single indication anywhere on the page to warn the English reader: what you are seeing is not what the original says.

There Is a Mole in Your Information Chain

I introduced a concept in a previous article that I call the “AI Mole.” The most covert way AI embeds itself is not by conspicuously replacing your job. It is by slipping undetected into the middle of your information chain, making a critical judgment call on your behalf without you ever knowing it happened.

In this case, that innocuous “Translate” button is a perfectly disguised AI Mole.

You assumed it was a neutral tool, a faithful dictionary that gives you what you ask for. But it has long ceased to be a dictionary. It has become a decision node. It inserted itself between you and the original information, made an unauthorized conversion, and got it spectacularly wrong. The most dangerous part is that you have no way of realizing it is wrong, because the English output reads flawlessly: grammatically correct, logically coherent, perfectly natural. It just happens to mean the exact opposite of the original.

This is what should send a chill down your spine: AI errors are no longer obvious gibberish on a screen. They are lies wrapped in flawless packaging.

This Is Not Censorship. This Is Far Worse.

Some might shrug: platforms processing content is standard practice, is it not?

But censorship and manipulation are two fundamentally different things.

Censorship says: “I find this inappropriate, so I am removing it.” When you see a notice saying “this content has been removed” or your search returns nothing, you at least know something was taken away. You retain a basic defense mechanism: you are aware that information is missing. In other words, you know that you do not know.

Manipulation says: “I have altered this content, and I will make you believe you are reading the original.” You have no idea anything has been changed. You do not know that what you received is not the author’s intent. The thought “I might be misled” never even crosses your mind. You are trapped in the blind spot of not knowing that you do not know.

Censorship strips you of information. Manipulation strips you of judgment.

Which is more dangerous needs no elaboration.

The Inability to Determine the Cause Is Precisely the Problem

How was this bizarre translation produced? A fundamental technical defect? A systematic bias in how the AI model handles certain contexts? Or did someone define a strategy behind the scenes, with AI faithfully executing it?

We do not know. And there is almost no way to verify.

But that is precisely the core of the problem.

When AI becomes the “black-box executor” in an information chain, whoever is behind it gains a perfect cloak of invisibility. If this was a deliberate strategy, discovery can always be met with a casual deflection to the algorithm: “This was auto-generated by AI. We will continue optimizing the model.” And if it truly was a system glitch, the platform may not have sufficient motivation to fix it.

I call this structure the “Reverse Moral Crumple Zone.”

I discussed the concept of the Moral Crumple Zone in a previous article: when a system fails, the human takes the blame. The classic scenario is an autonomous vehicle crash where accountability lands on the safety operator. In that scenario, AI is the actual decision-maker. The human is the scapegoat.

The Reverse Moral Crumple Zone works in exactly the opposite direction: humans set the rules, and the system takes the blame. Decision-makers define the strategy behind the curtain. AI executes it on the front stage. When caught, the excuses are always ready: “systematic bias,” “algorithmic limitations,” or “we are working on optimization.” Here, the human is the one pulling the strings. AI is the perfect scapegoat.

Two seemingly opposite directions, but the underlying structure is identical: accountability bounces endlessly between humans and systems, never landing on the party that actually made the decision.

Human-in-the-Loop? First You Need to Know There Is a Loop.

Every mainstream AI governance framework today, whether it flies the banner of “Responsible AI” or takes the form of internal corporate AI compliance policies, is built squarely on one core assumption: humans can oversee AI output.

The Human-in-the-loop model requires that humans approve AI output at every step before it is released.

The Human-on-the-loop model takes a step back: humans do not need to approve every step, but they monitor from the side and intervene when anomalies appear.

The “Translate button” case shatters both models simultaneously.

Human-in-the-loop fails because no approval step exists. You tap “Translate,” and AI feeds you the result directly. No human verifies whether the translation is accurate.

Human-on-the-loop fails equally because you cannot even detect the anomaly. You do not read Chinese. The English in front of you is grammatically flawless, logically coherent, and reads naturally. It just happens to mean the opposite of the original. You receive no signal whatsoever that human intervention is needed.
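The failure of both models can be made concrete with a minimal sketch. Everything in it is hypothetical: the `Output` record, the three release functions, the `surface_check` monitor, and the sample strings are illustrative assumptions, not any platform's actual implementation. It only shows that a monitor which sees nothing but fluent rendered text has nothing to flag, and that a path with no approval step never consults a human at all.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Output:
    source: str    # original text, which the reader may be unable to read
    rendered: str  # what the AI actually shows the reader


def human_in_the_loop(output: Output, approve: Callable[[Output], bool]) -> List[str]:
    """Release only what a human reviewer has explicitly approved."""
    return [output.rendered] if approve(output) else []


def human_on_the_loop(output: Output,
                      looks_anomalous: Callable[[str], bool],
                      alerts: List[str]) -> List[str]:
    """Release immediately; raise an alert only if the monitor sees an anomaly."""
    if looks_anomalous(output.rendered):
        alerts.append(output.rendered)
    return [output.rendered]


def translate_button(output: Output) -> List[str]:
    """The path described above: no approval step and no monitor."""
    return [output.rendered]


def surface_check(text: str) -> bool:
    """Hypothetical monitor: flags only visibly broken output (empty or garbled)."""
    return text.strip() == "" or "\ufffd" in text


# Placeholder strings, not the actual comment or its actual translation.
sample = Output(source="(blunt curse in the original language)",
                rendered="Wishing him a speedy recovery.")

alerts: List[str] = []
released = human_on_the_loop(sample, surface_check, alerts)
# The inverted translation is released, and the monitor raises no alert,
# because the rendered text is fluent and well-formed.
```

In this sketch, the approval gate in `human_in_the_loop` is the only place where the source and the rendered text could be compared, and it helps only if the reviewer can read both.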

The real blind spot is not whether humans are in the loop. It is whether humans even know there is a loop that requires their presence.

When AI’s acts of manipulation are themselves invisible, when AI’s erroneous output looks identical to correct output, every governance framework built on the assumption that “humans can oversee AI” is reduced to an exercise on paper.

How Many “Translate Buttons” Are Hiding in Your Organization?

You think a Translate button flipping a social media comment is harmless? Transfer that same underlying mechanism into the “AI-powered automated processes” your enterprise is actively pursuing, and think again.

Suppose your company deploys an AI meeting system. It listens in automatically, generates minutes, extracts action items, and emails them to all relevant parties. During a critical review meeting to decide whether a major project should go live, the technical lead issues an explicit warning: “This underlying architecture has a fatal flaw. Launching it will most likely cause a collapse.”

A brutally blunt dissenting opinion. Just like that “curse” in the comment section. But the AI, while capturing and distilling this statement, silently “polishes” it into a mild action item: “The technical team recommends continued optimization of architecture performance prior to launch.”

No human review. The AI follows its preset workflow and distributes the sanitized minutes directly to every executive. Leadership sees nothing but green lights, approves the launch, and disaster follows.

A project worth hundreds of millions fails. Strategy derails. None of it reversible. Yet every party can deflect accountability perfectly. This is precisely the core argument of what I call “Sun’s Decision Authority Matrix,” introduced in a previous article: once an AI-driven autonomous chain is running, accountability dilutes along the chain until every party can say, “I am not the one accountable.”

A single, unremarkable Translate button turned a curse into a blessing in broad daylight, and you had no idea it happened. If that same structure is now embedded in your enterprise, are you truly ready to let it run unsupervised?