AI Runs Entire Kill Chains in War. In Business, It Can’t Even Run a Supply Chain.

Sun’s Decision Authority Matrix

A hotel’s AI room-assignment system upgrades the incoming Mr. Sun to an Executive Suite on the 12th floor. The manager takes one look and overrides the decision: make it the Presidential Suite. Cost: virtually zero. Time: three seconds. The system ran a stack of variables internally—Ambassador-tier membership, 28,000 USD in annual spend, first stay at this property—and produced a recommendation. The manager doesn’t need to inspect the model’s feature weights. He only needs to judge the output: is Mr. Sun worth a better room? If yes, upgrade manually.

That is the entire world that current AI governance was built for.

Override. Audit. Transparency. The vocabulary sounds robust. It rests on one premise: AI is an individual employee. Every decision it makes can be reviewed and corrected in time. The conclusion is comprehensible, reversible, and low-cost. Human judgment applied to the final output—that’s enough.

Consider the numbers that sound so intimidating today: IDeaS makes 100 million pricing decisions daily across 30,000 hotels; Amazon auto-adjusts prices 2.5 million times a day; Upstart processes 92% of its loans with zero human involvement; Google Performance Max manages budgets for over a million advertisers. Every one of these is fundamentally the same thing: humans set boundaries, AI executes within them, and a manager can pull the plug at any time. A hundred million decisions—they are still point decisions. Each one operates within a single domain, with clear boundaries, and Override is always available.

Now watch the premise collapse.

An AI system predicts that sales in the Eastern China region will decline 15% next quarter. The procurement system automatically cuts raw material orders by 20%. The reduced order volume triggers a minimum-purchase clause in supplier contracts; the system calculates that the penalty for breach is lower than the risk of excess inventory, and accepts the breach. Logistics automatically releases two pre-booked warehouse slots. The finance system recalculates cash flow and adjusts the payment schedule for accounts payable.

Three months later, sales have not declined. They have risen. But raw materials are short, warehouse slots are gone, the supplier has collected penalties and is demanding a price increase, and the financial model has triggered a cascade of downstream adjustments. The result is not just a stockout: the contract breach has triggered a floor clause in a long-term agreement with a major shipping line, automatically raising all freight rates by 15% for the second half of the year.

Now who performs the Override? Override which step?

Reverse the sales forecast? Every downstream link has to be rebuilt: re-sign procurement contracts, re-lease warehouse space, claw back supplier penalties, reconstruct the financial model. In a point decision, Override means “change one room.” In an autonomous chain, Override means “dismantle a cross-functional chain that is already running, then rebuild from scratch.” The difference is not one of degree. It is one of kind. The cost is so high that no rational manager would press that button.
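The scope of an Override can be made concrete by treating the example as a dependency graph. The sketch below is illustrative only: the node names and the edges in `CHAIN` are assumptions about how the Eastern China example might be wired, and `override_scope` is a hypothetical helper, not part of any real system. It lists everything that has to be rebuilt when one step is reversed.

```python
from collections import deque

# Assumed wiring of the Eastern China example: each key feeds its output
# into the systems listed as its value. Names are hypothetical.
CHAIN = {
    "sales_forecast": ["procurement"],
    "procurement": ["supplier_contract", "logistics"],
    "supplier_contract": ["finance"],
    "logistics": ["finance"],
    "finance": [],
}

def downstream_of(node, graph):
    """Return every node that consumed, directly or indirectly, the output of `node`."""
    seen = []
    queue = deque(graph[node])
    while queue:
        current = queue.popleft()
        if current in seen:
            continue  # already counted via another path
        seen.append(current)
        queue.extend(graph[current])
    return seen

def override_scope(node, graph):
    """Overriding `node` means redoing the node itself plus everything downstream."""
    return [node] + downstream_of(node, graph)

print(override_scope("finance", CHAIN))
print(override_scope("sales_forecast", CHAIN))
```

In this toy graph, overriding the last step touches one node, while overriding the forecast touches all five. The graph says nothing about cost; it only shows that the number of things to undo depends on where in the chain the Override lands.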

You say: audit it. Audit what? On this chain, every intermediate node’s output is simultaneously the next node’s input. The sales forecast feeds procurement; procurement volume feeds logistics; logistics arrangements feed finance. The problem is not in any single node; it is in the transmission logic between nodes. Existing audit frameworks are designed to check whether one decision is correct. They are structurally incapable of checking whether the internal logic of an entire decision chain is coherent. The technology is not a complete void: process mining and digital twins are active fields. But at the organizational level, no company has established an executable chain-level audit loop.

You say: assign accountability. In the old world, someone signed off, and accountability automatically attached to the signatory. A meeting or an approval action forced three questions together: “where did this number come from,” “what was done based on it,” and “what irreversible consequences were committed.” AI eliminated the act of signing, but no one designed a replacement. The sales forecast on this autonomous chain is not “a decision that was approved”; it is “a state that was computed and propagated.”

No one signed off, so accountability has nowhere to land.

The data scientist says: “I just set the parameters.” The CTO says: “I just approved the system going live.” The business VP says: “I never even saw that number.” Accountability dilutes along the chain until everyone can say “nothing to do with me.” The human forced into the “in the loop” position becomes a sponge that absorbs blame, not an agent who exercises decision authority. Academia calls this the “Moral Crumple Zone.”

When AI evolves from “individual employee” to “autonomous chain,” the existing governance vocabulary collapses entirely.

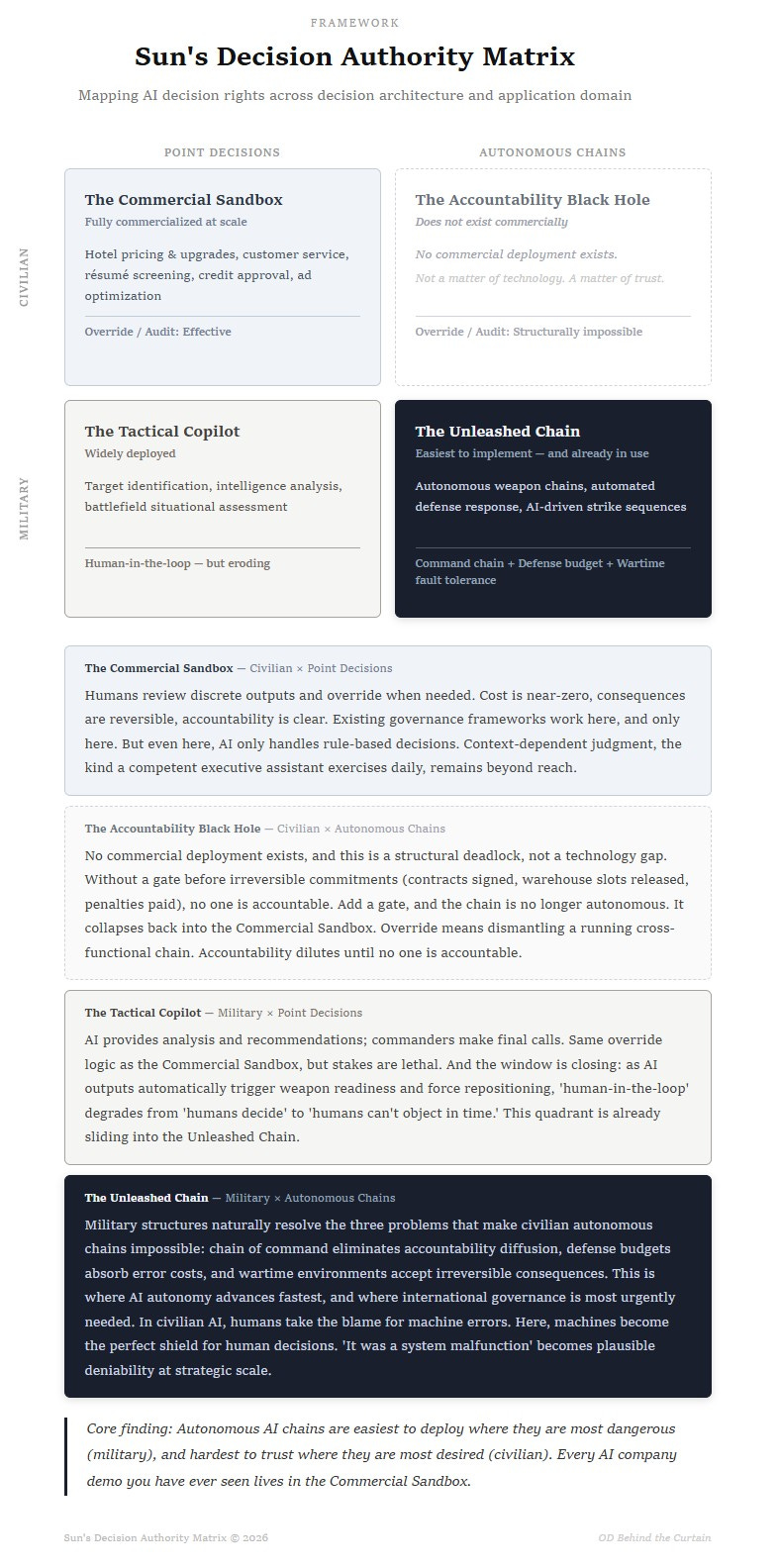

Sun’s Decision Authority Matrix

The allocation of any decision authority ultimately depends on three conditions:

Can the right to halt be exercised?

Is the cost of halting bearable?

Can accountability be attributed?

Existing AI governance frameworks can satisfy these three conditions, but only when AI is making point decisions. The moment AI evolves into an autonomous chain, they can no longer guarantee any of the three.

But distinguishing point decisions from autonomous chains is not enough. The same autonomous chain, placed in a commercial environment versus a military environment, yields completely different answers for halt rights, error tolerance, and accountability. The application domain must also be part of the analysis.

On this basis, I propose Sun’s Decision Authority Matrix. The horizontal axis is decision architecture (Point Decisions vs. Autonomous Chains). The vertical axis is application domain (Civilian vs. Military).
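As a compact reference, the two axes can be written down as a lookup table. This is only a sketch: the enum names and the `quadrant` function are hypothetical, and the four labels are the quadrant names used in the rest of this article.

```python
from enum import Enum

class Domain(Enum):
    CIVILIAN = "civilian"
    MILITARY = "military"

class Architecture(Enum):
    POINT_DECISION = "point decision"
    AUTONOMOUS_CHAIN = "autonomous chain"

# One label per cell of the 2x2 matrix.
QUADRANTS = {
    (Domain.CIVILIAN, Architecture.POINT_DECISION): "The Commercial Sandbox",
    (Domain.CIVILIAN, Architecture.AUTONOMOUS_CHAIN): "The Accountability Black Hole",
    (Domain.MILITARY, Architecture.POINT_DECISION): "The Tactical Copilot",
    (Domain.MILITARY, Architecture.AUTONOMOUS_CHAIN): "The Unleashed Chain",
}

def quadrant(domain, architecture):
    """Look up the quadrant for a given application domain and decision architecture."""
    return QUADRANTS[(domain, architecture)]

print(quadrant(Domain.CIVILIAN, Architecture.POINT_DECISION))
```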

The Commercial Sandbox — Civilian × Point Decisions

Pricing, approvals, customer service, résumé screening. This is the only quadrant that is mature, heavily commercialized, and crowded. It is also the quadrant that virtually every AI company uses for marketing: they demonstrate AI’s value with the most harmless scenarios, leaving the impression that AI is always this tame. Existing regulatory frameworks are perfectly adequate here. This quadrant needs neither panic nor over-regulation.

But don’t overestimate AI’s capability boundary within this quadrant either. The successfully commercialized AI point decisions share a common trait: finite rulesets. No matter how many variables hotel pricing and room assignment involve, the end result is an optimization function.

But many seemingly simple tasks are not optimization functions. An executive assistant sends a meeting invitation. Someone replies they can’t attend. Does the meeting still happen? If A can’t make it, the meeting goes ahead. But if both A and B can’t make it, C must be present, because C is the only person who can represent A’s position. C has another meeting this afternoon, so this one needs to be rescheduled. When it gets rescheduled depends on the boss’s calendar tomorrow. Every step in this judgment chain lives outside any SOP. It exists in the assistant’s tacit understanding of organizational power structures, interpersonal dynamics, and the relative weight of agenda items. Current AI is more than capable of handling formulaic point decisions, but remains helpless with point decisions that require contextual judgment. Companies should be clear about which type of decision they are targeting before deploying AI.

The Accountability Black Hole — Civilian × Autonomous Chains

To get a cross-departmental AI autonomous chain running in a commercial environment, you need to answer three questions: Who can halt it? Can the cost of halting be absorbed? If something goes wrong, who is on the hook?

This quadrant is empty.

How do you determine whether an AI system is a point decision or an autonomous chain? Two criteria, both of which must be met:

System A’s output automatically becomes System B’s input with no human confirmation in between (cross-domain continuous triggering);

The chain contains irreversible cost lock-ins. Once triggered, the cost of rollback escalates steeply (contracts signed, warehouse slots released, penalties paid).
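The two criteria can be expressed as a small check. The sketch below is one possible reading, not a formal definition: the `Handoff` fields and the function name are hypothetical, and it assumes that criterion 2 only counts when the irreversible lock-in sits on a handoff that fires without human confirmation.

```python
from dataclasses import dataclass

@dataclass
class Handoff:
    source: str
    target: str
    human_confirms: bool  # does a person confirm before the target acts?
    irreversible: bool    # does this handoff lock in a cost that is expensive to roll back?

def is_autonomous_chain(handoffs):
    """Apply both criteria; a system qualifies only if both are met."""
    automatic = [h for h in handoffs if not h.human_confirms]
    # Criterion 1: some output becomes the next system's input with no human in between.
    continuous_triggering = len(automatic) > 0
    # Criterion 2 (as assumed here): an irreversible lock-in is crossed automatically.
    irreversible_lock_in = any(h.irreversible for h in automatic)
    return continuous_triggering and irreversible_lock_in

# Hypothetical encodings of three cases discussed in this article.
hotel = [Handoff("room_model", "manager", human_confirms=True, irreversible=False)]
replenishment = [Handoff("demand_model", "replenishment_order", human_confirms=False, irreversible=False)]
eastern_china = [
    Handoff("sales_forecast", "procurement", human_confirms=False, irreversible=False),
    Handoff("procurement", "supplier_contract", human_confirms=False, irreversible=True),
    Handoff("procurement", "logistics", human_confirms=False, irreversible=True),
]

for name, system in [("hotel", hotel), ("replenishment", replenishment), ("eastern_china", eastern_china)]:
    print(name, is_autonomous_chain(system))
```

Under these assumptions only the Eastern China example qualifies. The hotel case fails criterion 1, and the replenishment case passes criterion 1 but fails criterion 2.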

Cross-departmental, fully autonomous end-to-end processing does not exist in the civilian commercial sector today. Not “in development,” not “about to launch”—zero.

Pactum runs autonomous negotiations (Walmart uses it; 2,000 simultaneous supplier conversations, 68% close rate). RELEX runs autonomous replenishment (96% of replenishment decisions are untouched by humans). UPS ORION runs autonomous route optimization (55,000 trucks optimized daily, saving 100 million miles a year). Impressive as they sound, test them against the two criteria: Pactum’s negotiation outputs do not automatically trigger downstream procurement and logistics cascades. RELEX’s replenishment orders do not automatically sign shipping contracts and lock warehouse slots. ORION’s route optimization does not automatically rewrite financial budgets. Their outputs do not automatically cross nodes of irreversible commitment (contracts, slots, penalties), and rollback costs are low. In plain terms, they are Single-Function Autonomy—the most aggressive plays inside the Commercial Sandbox, not the Accountability Black Hole.

It is not a technology problem. It is a trust problem.

When a chain can automatically cross irreversible commitment nodes (signing contracts, releasing warehouse slots, paying penalties), someone must be accountable for “allowing it to cross.” But no one fills that role today. Without that person, there is no one to hold accountable when things go wrong.

Some might say: just add a control gate. Put a human approval step before every irreversible commitment node, and you get both automation and accountability. But this is precisely the problem. The moment you add a gate, the chain is no longer autonomous. It gets sliced into segments, each running point AI, with gates welding authority in place between them. That is not the Accountability Black Hole. That is a reassembled version of the Commercial Sandbox. So this quadrant faces a structural deadlock in the commercial world: without a gate, it cannot be held accountable; with a gate, it is no longer an autonomous chain.
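The deadlock can be illustrated with a toy model. The step names below are hypothetical labels for the Eastern China example, and `add_gates` is an assumed helper written for this sketch: it places a human approval gate before every irreversible step and returns the stretches of automation that remain.

```python
# (step name, is the step an irreversible commitment?)
STEPS = [
    ("forecast_sales", False),
    ("cut_orders", False),
    ("accept_contract_breach", True),
    ("release_warehouse_slots", True),
    ("reschedule_payables", False),
]

def add_gates(steps):
    """Cut the chain in front of every irreversible step.

    Each returned segment is a run of steps that executes without a gate
    inside it. Every irreversible step starts a segment, so it can only
    run after a human has approved it.
    """
    segments, current = [], []
    for name, irreversible in steps:
        if irreversible and current:
            segments.append(current)  # the gate closes the running segment
            current = []
        current.append(name)
    if current:
        segments.append(current)
    return segments

for segment in add_gates(STEPS):
    print(segment)
```

With two irreversible steps, the single five-step chain becomes three short segments, and no segment crosses an irreversible commitment on its own. That is the sense in which a gated chain is a reassembled set of point decisions.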

Finance looks like the closest thing to this quadrant. In high-frequency trading, cascading reactions between algorithms genuinely exist: one algorithm’s sell signal triggers another’s hedge, which triggers a third’s stop-loss. But financial trading runs end-to-end inside electronic systems, and exchanges and clearing systems are natural gates: circuit breakers can freeze an entire chain in milliseconds, and every transaction can be monitored, traced, and even intercepted before settlement. In other words, the financial system uses infrastructure-level mandatory gates to slice a seemingly autonomous chain back into controllable segments. It is not an exception to the Accountability Black Hole. It is the best illustration of the rule that adding a gate collapses the chain back into the Commercial Sandbox.

Physical commerce is different. Contracts are signed with real people. Warehouse slots are locked with shipping lines. Penalties are paid in real money. Once these commitments are made, no system can reverse them with one click. That is why this quadrant will remain empty in civilian physical commerce.

The Tactical Copilot — Military × Point Decisions

AI-assisted target identification, intelligence analysis, battlefield situational assessment. The system recommends; the commander decides. Every major military power is deploying at scale. Humans remain in the loop.

The governance logic here is nearly identical to the Commercial Sandbox: the commander is the hotel manager. AI produces a conclusion; the human glances at it and overrides if needed. But the stakes are in a completely different league. The hotel manager gets it wrong, a guest complains. The commander gets it wrong, civilians die. The same Override mechanism, under different stakes, gives “human-in-the-loop” an entirely different meaning.

A reconnaissance drone over Syria identifies a suspected militant staging area. The operator sees the AI-generated target box on the screen. He has thirty seconds to authorize or deny the strike. This is the Tactical Copilot’s standard scenario: the machine sees, the human decides. But move the same drone to the electronic-warfare-saturated skies of eastern Ukraine, where the signal can cut out at any moment. Once the link is lost, that thirty-second decision window ceases to exist. The machine will not hover in place waiting for reconnection. It either crashes or switches to autonomous mode and continues the mission. Same drone, same algorithm—from the Tactical Copilot to the Unleashed Chain, separated by a single lost signal.

This is not an isolated case. “Human-in-the-loop” is degrading from “humans decide” to “humans can’t object in time.” Time compression is only the first dimension of the slide. The second is the automatic interlocking of decision chains: when AI’s situational assessment automatically triggers weapon systems into ready state, automatically adjusts force deployment plans, and automatically updates rules of engagement parameters, the commander is no longer facing a recommendation he can veto. He is facing a chain that is already running. Time compression means the human “can’t intervene in time.” Decision chain interlocking means the human “doesn’t know where to intervene.” Stack the two dimensions, and the Tactical Copilot slides into the Unleashed Chain.

The Unleashed Chain — Military × Autonomous Chains

This is the most uncomfortable quadrant in the entire matrix. The irony: it is the easiest to implement.

The military naturally resolves the three pain points that paralyze the commercial world:

Chain of Command perfectly eliminates accountability diffusion. Commanders bear accountability for all actions under their command, whether executed by humans or machines. This is not a system that needs to be redesigned. It has been running for centuries;

Defense budgets in the hundreds of billions can absorb extreme error costs. U.S. military AI spending was $9.2 billion in 2023 and is projected to reach $38.8 billion by 2028;

Wartime environments naturally accept irreversible consequences, as long as the mission is accomplished.

This quadrant is not only home to superpower competition. It is crowded with players seeking asymmetric advantage.

Israel’s AI systems generated and authorized, in weeks, strike targets that previously required human analysts years to confirm. On the Ukrainian battlefield, the drone you just saw in the Tactical Copilot—the one that lost its signal—is not a hypothetical. It happens every day, and it is evolving from single-unit autonomy to swarm autonomy. Turkey’s autonomous drone fleets have already changed the rules of regional warfare as off-the-shelf products.

Here, no one cares about explainability. When the choice is between activating autonomous strike and being killed, humans hand over the final firing authority to machines without hesitation.

But the real danger is not at the tactical level. Every case above involves autonomous decisions on a local battlefield: one target, one drone. Yet the moment tactical autonomy succeeds, it naturally climbs toward the strategic tier: from “autonomously identifying and striking one target” to “autonomously planning and executing a strike sequence,” then to “autonomously assessing the battlespace and adjusting the campaign plan.” Each level up means a longer chain, more irreversible consequences, and a narrower window for human intervention. This slide from tactical to strategic reveals the darkest reality of this quadrant: the reverse Moral Crumple Zone.

In civilian AI, humans are forced to take the blame for machine errors. In military autonomous chains, AI becomes the perfect shield for human decisions: Plausible Deniability. If a cross-border strike is triggered, the attacking party can attribute it to “a logic fault in the autonomous system.” This is the fundamental reason behind last year’s explicit consensus between the U.S. and China that “humans must retain ultimate control over nuclear weapons.” The great powers see the game for what it is: the door must be sealed shut. No party can be allowed to launch a nuclear strike under the cover of “system malfunction” and walk away from ultimate accountability.

Conclusion

Looking back across the four quadrants, what truly determines how far AI can go has never been technology. It is three organizational questions:

Who has the authority to halt?

Can the cost of halting be absorbed?

If something goes wrong, who is on the hook?

This matrix reveals a deeply unsettling conclusion: autonomous AI chains are easiest to deploy in the domain where they should least be used (military), and hardest to trust in the domain where they are most desired (civilian).

This map does not provide answers. But it does something more fundamental: it helps you locate where the problems are.

If you are a corporate decision-maker, it tells you which quadrant the AI you are deploying actually sits in, and whether your governance tools are adequate.

If you are a policymaker, it shows you which quarter of the map your regulatory framework covers, and which three-quarters it misses.

If you are an AI practitioner, it explains why clients pay without hesitation in the Commercial Sandbox and won’t touch the Accountability Black Hole.

Existing national-level AI governance frameworks, from the EU AI Act to G20 declarations, are almost entirely crammed into the Commercial Sandbox, where the debate is vigorous. This is like drafting an elaborate set of rules for managing hotel room upgrades (who qualifies for a suite, under what conditions, how to compensate if the upgrade goes wrong) and then declaring that the building’s fire safety problem is also solved. Covering the entire map with the rules of the safest quadrant is not governance. It is self-deception.

Let’s stop pretending we have drawn the boundaries for AI. Three-quarters of the map is still blank.