GenAI Is Cutting the People It Can’t Replace

As an org design consultant, I increasingly find myself not hiring junior consultants for projects. I used to bring people on naturally: desk research, initial analysis, framework building, first drafts. Now, a few LLMs working in tandem are more than enough. Faster, cheaper, and I don’t have to spend time mentoring anyone.

I know what this means, because I walked that exact path myself. I’m bypassing the very road that made me who I am.

And behind this lies a problem far more serious than “fewer entry-level jobs.” GenAI isn’t compressing low-end labor itself. It’s compressing the work that used to carry the talent development function. And organizations are clearing headcount from the bottom, where it’s cheapest. Efficiency gains and capability gaps are happening simultaneously.

I previously wrote a piece analyzing why layoffs always start with junior employees. Today’s article goes one layer deeper, into the structural problem beneath that pattern.

“Who gets cut” and “whose work gets replaced” are not the same thing

Statistically, GenAI adoption is hitting junior employees harder: more attrition, more early-career disruption. This easily creates a misleading impression: that junior work is more replaceable by GenAI and mid-level employees are safer.

I believe the opposite is true.

What GenAI is best at absorbing isn’t ground-level legwork. It’s the work that mid-level employees do in volume: research synthesis, document consolidation, preliminary analysis, standardized summaries, drafting, review. These “presentable work products,” the kind that used to require years of solid professional training to produce reliably, are precisely why mid-level roles exist. And they’re precisely what LLMs compress best.

Yet when organizations make cuts, juniors go first. Not because their tasks are more replaceable, but because cutting them is cheaper, easier, and draws the least resistance.

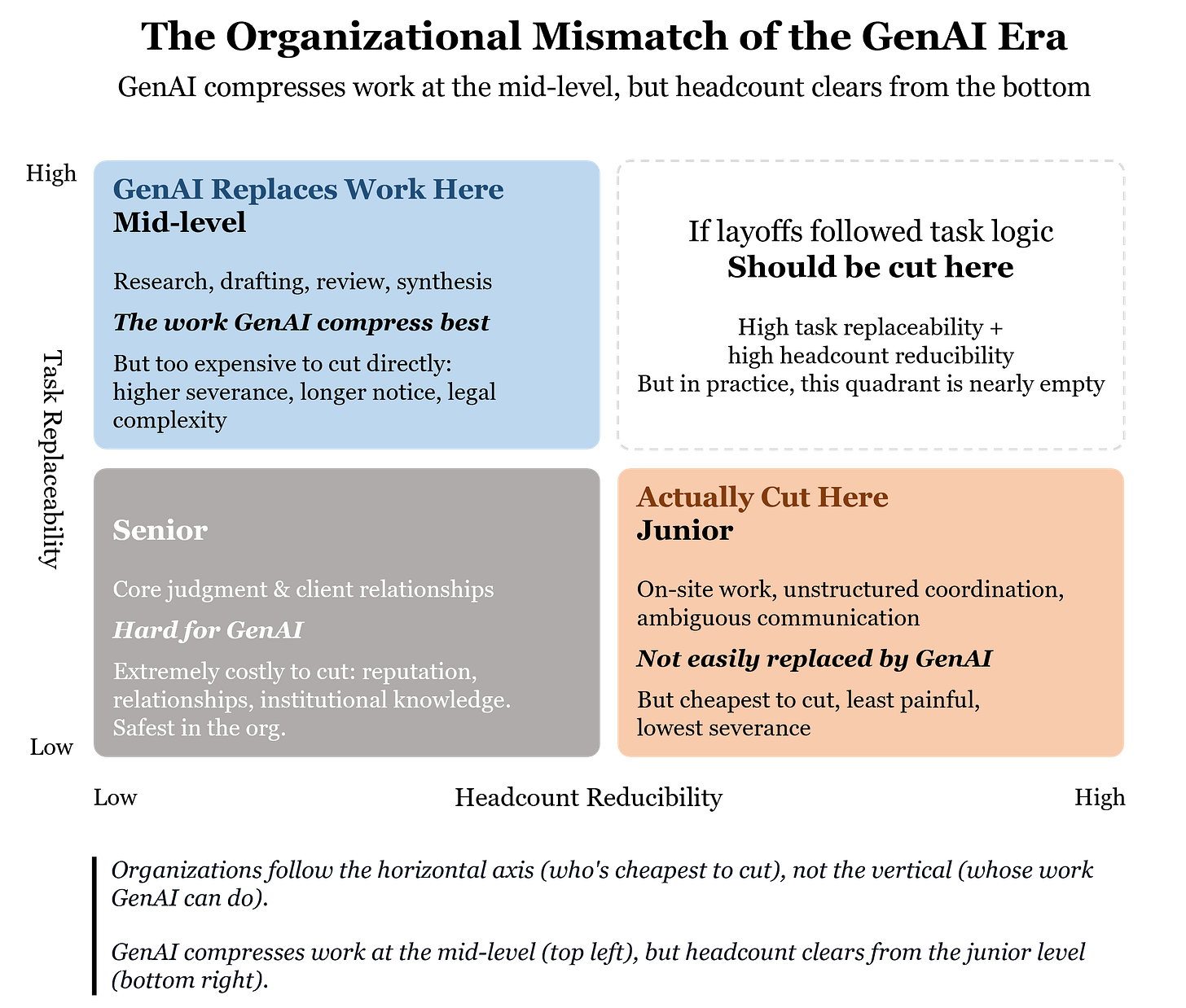

There’s a mismatch here that almost no one has named explicitly. I use two dimensions to illustrate it:

Task Replaceability: To what extent can GenAI handle the day-to-day work of this role?

Headcount Reducibility: How low is the cost and friction of eliminating someone at this level?

Overlay these two dimensions, and a counterintuitive picture emerges:

Mid-level employees sit in the “high task replaceability, low headcount reducibility” quadrant. GenAI can handle most of their daily output, but the economic cost of cutting them directly is steep: larger severance packages, longer notice periods, more complex legal processes. Under an N+3 package (one month of pay per year of tenure, plus three more), letting go of a mid-level employee with 12 years of tenure and a $7,000 monthly salary might cost about $105,000 in severance. Roughly the same budget can eliminate ten junior employees with 2 years of tenure and $2,000 monthly salaries, at about $10,000 each. When the CFO is staring at a fixed severance budget and a headcount reduction target, the math makes the decision.
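To make the arithmetic concrete, here is a minimal sketch of the comparison. It assumes that N+3 means one month of salary per year of tenure plus three additional months; the function name `severance` and its parameters are my own, for illustration only, and real severance rules (caps, notice pay, local law) vary.

```python
def severance(monthly_salary: int, tenure_years: int, extra_months: int = 3) -> int:
    """Severance under an assumed N+3 rule.

    N months of pay for N years of tenure, plus `extra_months` more.
    This is a simplification: statutory caps and notice pay are ignored.
    """
    return monthly_salary * (tenure_years + extra_months)


# Figures taken from the example above.
mid_cost = severance(monthly_salary=7_000, tenure_years=12)    # 15 months of pay
junior_cost = severance(monthly_salary=2_000, tenure_years=2)  # 5 months of pay

# How many junior exits one mid-level severance package would fund.
juniors_per_mid = mid_cost // junior_cost

print(f"Mid-level severance: ${mid_cost:,}")       # $105,000
print(f"Junior severance:    ${junior_cost:,}")    # $10,000
print(f"Juniors per mid-level package: {juniors_per_mid}")  # 10
```

Under these assumptions, one mid-level exit costs about as much as ten junior exits, which is why a fixed severance budget pushes the cuts downward regardless of whose tasks GenAI can actually do.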

Junior employees sit in the “low-to-medium task replaceability, high headcount reducibility” quadrant. Much of what they do (on-site coordination, unstructured communication, handling ambiguous information) isn’t that easy for GenAI to replace. But cutting them is the cheapest and least painful option. They’re not cut because they’re underperforming. They’re cut because they’re affordable.

Organizations follow the vertical axis (who’s cheapest to cut), not the horizontal axis (whose work GenAI can actually do). The work GenAI actually compresses sits at the mid-level, but the cost-cutting happens at the bottom of the pyramid.

This mismatch is dangerous because it creates a false narrative: it looks like organizations are using GenAI to replace “low-end work,” when in reality they’re reducing headcount in the cheapest way possible. After the juniors leave, their work floats up to mid-level. Mid-level employees are now integrating AI output while picking up basic tasks they haven’t touched in years. Those who can’t sustain it leave on their own. Voluntary resignation means zero severance. The organization cuts from the bottom and indirectly forces out the middle. One round of severance payments, two layers of people gone. In the short term, it looks like a smart deal. But in the process, something far more critical is silently disappearing.

The output remains. The development doesn’t.

Many entry-level roles used to carry a dual function. On one hand, they were part of the delivery process: immediate output. On the other, they were mechanisms for training, observation, and selection: talent development.

When a junior employee first joins an organization, most of what they do isn’t glamorous. But structural thinking, problem awareness, resilience under pressure, the ability to turn ambiguous problems into clear outputs: none of that shows up in a one-hour interview. It’s built layer by layer through foundational work, and it’s observed the same way.

When GenAI takes over the “immediate output” function of these tasks, it simultaneously strips away their “development” function. But the organization’s dashboards only register the former: efficiency up. The latter, the quiet erosion of the talent pipeline, never appears on any report.

This is what I call false prosperity. Faster delivery, lower labor costs, better margins. But the prosperity is borrowed. Organizations aren’t just saving on low-value labor costs. They’re also eliminating the cost that used to generate the next generation of professional talent. You won’t immediately see “top talent disappearing.” You’ll see reports coming out on time, deliverables arriving even faster. But three to five years later, you’ll notice that the people capable of high-level judgment haven’t emerged at the rate they used to.

This is, in essence, a hidden liability. And like all hidden liabilities, its most dangerous feature is that everything looks fine right up until it doesn’t.

“But can’t AI also help juniors learn faster?”

This is the most common rebuttal, and the most comforting optimistic narrative. But it conflates two things.

AI can accelerate learning. It cannot create learning environments.

A junior employee who uses AI to produce a polished report hasn’t necessarily understood why it’s written that way. What they’ve skipped is precisely the most valuable part of the training: groping for structure in ambiguity, making trade-offs under pressure, getting sent back six times by a senior before finally grasping what “good enough” actually means. Developing judgment isn’t an information acquisition problem. It’s a process of repeated trial, error, and correction. That process requires real delivery environments, with real pressure, real consequences, and real feedback.

Courses can be accelerated with AI. But judgment isn’t taught in courses. It’s forged in real work.

I’m not saying all entry-level work must be preserved, nor am I denying that AI can improve learning efficiency. The real problem is that organizations have no incentive to deliberately rebuild the judgment-formation mechanisms that were once embedded in real delivery work. AI can increase the speed of learning. But what’s actually missing isn’t speed. It’s the environment.

The market won’t self-correct

Many people enjoy the easy narrative: AI frees humans from repetitive labor so we can all do higher-order, more creative work.

Freed to do what, exactly? Who bears the cost of training? Who absorbs the inefficiency of people still learning? Who pays the tuition for the next generation’s talent pipeline?

Companies won’t do this voluntarily. Companies naturally optimize for lower cost, higher efficiency, and more immediate returns. You can’t acknowledge that GenAI delivers significant efficiency gains while also expecting most companies to voluntarily maintain a low-efficiency, high-development talent pathway.

There’s also a classic collective action problem at work: every company has an incentive to free-ride on others’ development investment. If Company A spends resources developing junior employees, Company B can simply poach the mid-level talent that Company A produced. As GenAI makes the “skip development, hire ready-made” strategy increasingly viable, fewer and fewer companies will choose to bear the cost of developing talent.

This isn’t a moral failing of any single company. It’s a structural market failure.

The global policy toolkit isn’t addressing the right problem

Governments haven’t been idle on AI’s workforce impact. Training levies, tax credits, mandatory AI literacy programs: there’s no shortage of initiatives. But they share a fundamental blind spot: they assume “development” is an activity that can be separated from work, independently subsidized, and independently assessed.

That assumption is disconnected from reality. The reason a junior employee can become a reliable mid-level professional in three to five years isn’t because someone put them through a training program. It’s because they did massive amounts of real work on real projects. Development doesn’t happen alongside work. Development is the work itself.

Once AI takes over the tasks that carried the development function, you can’t compensate by building a separate “training program” on the side. No matter how much you subsidize, if junior employees don’t get the chance to do real things in real delivery environments, development simply won’t happen.

The question isn’t “who pays for training.” It’s “who preserves the environment where development happens.”

The real solution is straightforward and right in front of us

If development is embedded in work, then protecting the development function means preserving the space within work where development can occur.

To put it bluntly: layoffs are fine, but organizations must deliberately retain opportunities for a portion of their people to do real work on real projects, even when AI is perfectly capable of replacing them. This isn’t about efficiency. It’s about ensuring that when models fail, when tasks exceed model boundaries, or when the regulatory environment shifts, the organization still has people with the judgment to take over.

This logic isn’t new. The defense industry has been doing it for decades.

Fighter jet manufacturers don’t disband their design teams during years without new contracts. Shipyards take on civilian orders between naval commissions. Not because civilian products are more profitable, but because once you scatter the team and shut down the line, the cost of rehiring, retraining, and rebuilding cohesion for the next contract far exceeds the cost of keeping them on through the gap. What they practice is capacity preservation: maintaining the capability base so it’s ready when needed.

Defense and knowledge work aren’t the same industry, of course. But they face the same organizational choice: whether to sacrifice, in the name of short-term efficiency, a capability that looks redundant in peacetime but becomes decisive under stress.

Knowledge-intensive organizations now face that exact choice. You can let AI handle most delivery. But you need to retain enough people doing real work on real projects. That means on-the-job training: not classroom courses, not simulations, but sustained practice under real delivery pressure. These people and the skills they develop aren’t “redundancy.” They’re your capability reserve.

This is a risk management decision, not a charity decision.

A company’s Plan B is also a nation’s bottom line

From a company’s perspective, capacity preservation is Plan B. AI isn’t infallible. Models get updated or go offline, networks go down, policies change. An organization that outsources all cognitive labor to AI without retaining sufficient internal human capability is as fragile as a manufacturer that depends on a single supplier for every component. Maintaining a capability reserve isn’t a waste of resources. It’s an insurance policy.

But if this choice is left entirely to individual companies, the result will mirror the collective action problem described above: some companies will always choose not to retain, not to develop, not to invest, and then scramble to poach talent from the market when things go wrong. When everyone thinks this way, there’s no one left to poach.

So ultimately, this is a national-level problem.

Just as the defense sector can’t hand all manufacturing capacity to peacetime’s most efficient bidder, the knowledge economy can’t hand all cognitive capacity to the AI era’s most efficient solution. By the time you realize the capacity isn’t in your hands, it’s already too late. The ability to develop talent isn’t just a corporate pipeline. It’s an economy’s industrial base.

The specific policy tools can be designed over time. Perhaps some form of mandatory training retention ratios. Perhaps changes to how on-the-job training is treated for tax purposes. Perhaps industry-level requirements modeled on aviation’s mandatory manual flying hours, a minimum threshold for human-performed work. These need deeper discussion.

But the direction should be clear: not building a separate training system outside of work, but preserving the space for human participation in real work, within work itself.

The moment an organization stops treating real work as a development mechanism by design, it begins, behind the appearance of rising efficiency, to overdraw on its future supply of judgment.

An organization unwilling to develop people may look more efficient in the short term. A society unable to sustain the development of people will inevitably pay a far greater price.