Nobody’s Driving. Nobody’s Accountable.

What an OD person sees from the backseat of a robotaxi

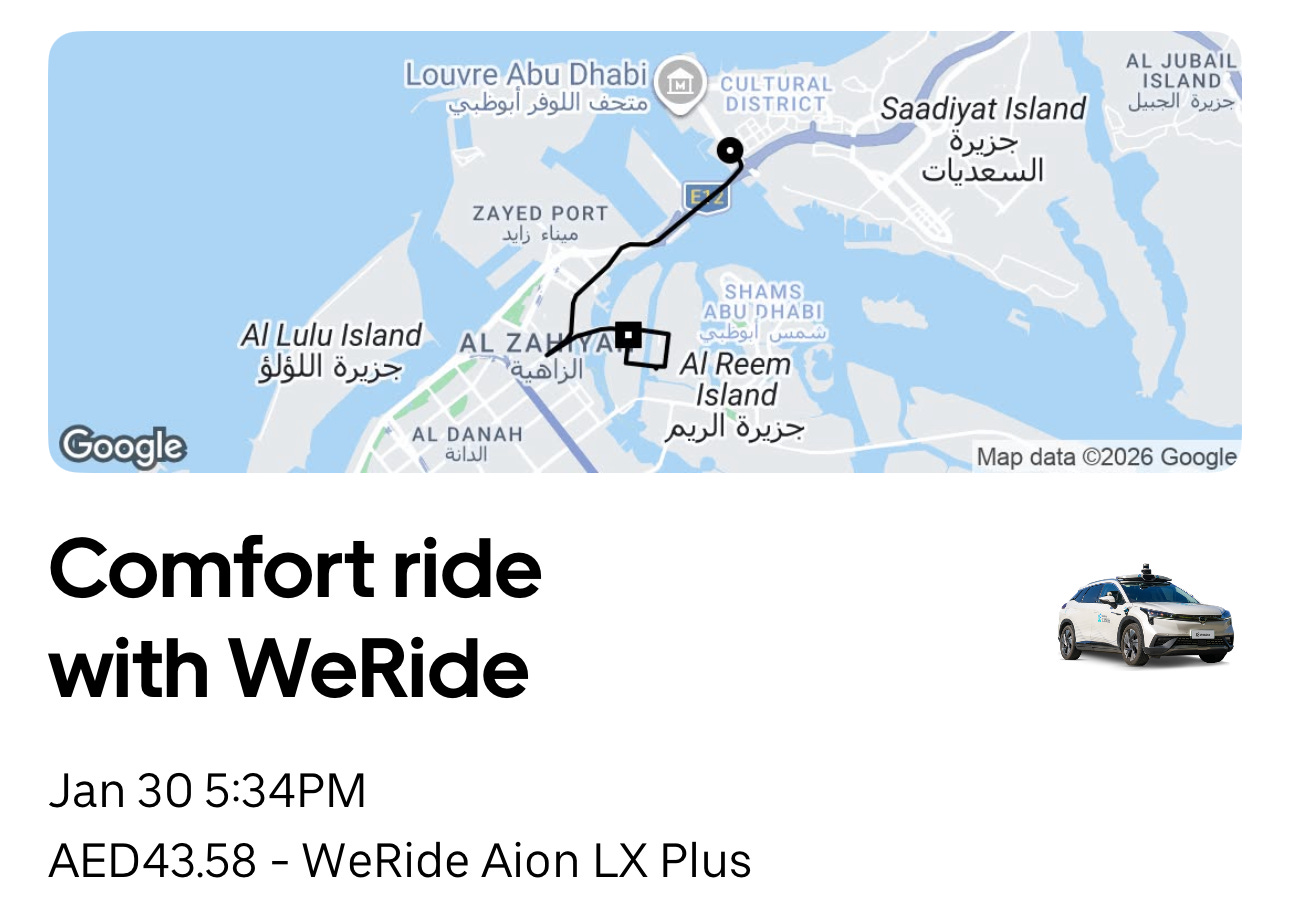

I had just finished visiting the Louvre Abu Dhabi and called an Uber to The Galleria.

The app gave me an option I had never seen before: Autonomous with Specialist Saif.

It was my first time riding a WeRide. Exciting. But those of us who do Organization Development for a living have a chronic condition: the moment I booked that ride, my brain started running scenarios. Who is making decisions in this service chain? If something goes wrong, who owns it? How is accountability allocated across the different parties?

Then came the second surprise.

I walked up to the car and noticed the “unlock door” button on the app was greyed out. I couldn’t tap it. Before I could figure out why, the door opened from the inside. A man was sitting in the driver’s seat.

This was “Specialist” Saif.

The car started moving. The steering wheel turned on its own. Saif’s hands rested on his knees. He was not driving. But he was sitting in the driver’s seat.

As an OD person, I already had questions.

What exactly are you?

Who is Saif?

Uber calls this role “Vehicle Specialist.”

Not “driver.” Call him a driver and the whole “autonomous” narrative collapses.

Not “safety monitor.” That would be admitting the system is not reliable enough.

Not “override operator.” Even worse. That would tell every passenger the car could malfunction at any moment.

So they called him a “specialist.” A word that sounds important but, if you think about it, commits to absolutely nothing.

Anyone who has worked in OD knows that the name an organization gives a role is never accidental. Names define authority boundaries. Names define accountability. Names define what a person actually is within the organization.

“Vehicle Specialist” accomplishes one thing with surgical precision: it makes Saif simultaneously present and absent.

He is in the car, so you feel someone has your back. He is not a driver, so if something goes wrong, he was not driving. He is a “specialist.” But a specialist in what? With what authority? Under what obligations? Nobody says.

This might be the finest piece of corporate wordsmithing of 2026.

The police car in the traffic jam

I always stumble into interesting situations when I travel. This trip was no exception.

We were stuck in traffic. A police car came up from behind, sirens on, trying to push through the queue. One by one, the cars in front of it began pulling aside, as if someone were directing them. But no one was.

A thought hit me: if Saif were not in this car, would it have moved?

Think about how a human decides to yield. The siren. The markings on the car. The uniformed officer behind the wheel. The flashing lights in the rearview mirror. The cars around you already pulling over. You synthesize all of this in a second or two, make a judgment call, and move.

And here is the thing: even with all that information, you cannot be one hundred percent certain it is a real police car. You simply judge the probability to be high enough and act. This is human decision-making under uncertainty.

Can autonomous vehicles detect police lights? Yes. Waymo says its cars can recognize police uniforms and interpret hand signals. These are technical problems, and technical problems can be solved.

But twenty cars yielding in unison during a traffic jam? That is not a technical problem. That is social intelligence. Every driver is reading the intentions of the cars around them and coordinating without a single explicit instruction. No traffic signal. No dispatch center. No algorithm directing the choreography.

Industry experts keep telling us that the pace of AI advancement far exceeds our imagination. Well, this is the perfect test. I would genuinely love to see a fully autonomous vehicle navigate a congested road and yield to a police car weaving through from behind.

Our car did yield, eventually. But here is what I cannot tell you: whether Saif took over the wheel or the system figured it out on its own. I was watching the police car, not Saif’s hands. From the backseat, there was no way to tell who was in control at that moment.

And that, if you think about it, is the point. If a passenger sitting right there cannot tell who is making the decisions, what chance does anyone have of assigning accountability after the fact?

If that delay had compromised a police operation, if someone had died because of it, who would be accountable?

Not even a tip

The ride ended. The rating screen appeared.

No tipping option.

In a regular Uber, you can tip the driver. Tipping rests on the recognition that a human being was a meaningful link in the service chain.

Saif was physically present for the entire ride. He opened my door. The app told me: no tip necessary.

What does this tell us?

One reading: Uber does not consider what Saif does a service. He is not a service provider. He is a component of the vehicle, same category as the seatbelt or the airbag. A human being, reclassified as a part.

A deeper reading: Uber cannot allow you to tip him. The moment you tip, you have confirmed with real money that a human was involved in your ride. If a human was involved, it is not fully autonomous. Removing the tip option was not an oversight. It was a design choice. It does not protect your wallet. It protects the classification of the trip.

OD people see this immediately. The organization is using the design of its payment system to erase the existence of a role. This follows the same logic as the naming. The name makes him ambiguous. The payment makes him invisible.

The accountability vacuum

Now let us connect the dots.

Suppose that day in traffic, I had been in a fully driverless WeRide. The police car could not get through. The delay cost someone their life.

Who is accountable?

I will ask around on your behalf:

WeRide says: Our vehicles have been validated in over 30 cities worldwide. The technology meets all standards.

Uber says: We are a platform, not a manufacturer. The passenger selected autonomous mode.

Abu Dhabi’s Integrated Transport Center says: We issued the operating permit through proper procedures.

The insurer says: Please first determine whether the cause was a technical fault or an external factor.

Every party has a reasonable answer. None of them are lying. But accountability has been sliced into so many pieces that each slice is thin enough to ignore.

Accountability did not disappear. It evaporated.

This is the concept I introduced in my previous article, The AI Mole: the accountability vacuum. When AI gains decision-making autonomy but the organization has not redefined who is accountable for AI’s decisions, a vacuum forms. And everyone has a valid reason to say “not me.”

The real problem with 99%

Here is where most people get the analysis wrong.

The common assumption is that autonomous driving, and AI more broadly, is a technology problem waiting for a technology solution. Get the accuracy high enough, and the objections will go away. Reach 99%, and you are almost there. Reach 99.99%, and surely no one can complain.

But that is not how decision makers actually think.

I have spent years watching executives evaluate process automation, AI-driven workflows, and algorithmic decision-making tools. What I have observed is this: accuracy is never the real objection. Accountability is.

Consider a manual process run by a human team. Say it achieves 90% accuracy. Not great, by any measure. But organizations accept it every day. Why? Because the accountability chain is intact. There is someone accountable. That person has a name, a title, a manager, and a disciplinary process. If something goes wrong, you know exactly where to go. You may not be happy with 90%, but you can live with it, because someone owns the outcome.

Now introduce an AI system that achieves 99% accuracy. On paper, this is a massive improvement. In practice, decision makers hesitate. Not because 99% is not good enough. Because nobody can clearly answer what happens with the remaining 1%. Who owns that 1%? The developer? The vendor? The team that approved the deployment? The person who was supposed to be monitoring the output?

When the accountability chain is clear, organizations tolerate surprisingly high error rates. When the accountability chain is broken, even spectacular accuracy is not enough.

This is why decision makers, despite what they say publicly, benchmark AI against 100%. Not because they genuinely believe machines should be perfect, but because only 100% eliminates the need to answer the question “who is accountable when it fails?” Anything less than 100% requires an accountability framework that nobody has built.

And the AI industry knows this. That is why so many vendors sell the dream of 100% accuracy, or carefully avoid mentioning error rates altogether. Because the moment you admit to 1% failure, the next question is “who pays for that 1%?” And nobody wants to answer it.

This is not limited to autonomous driving. It applies to every AI system that makes or influences decisions: automated approvals, AI-driven hiring screens, algorithmic credit scoring, robotic process automation. The pattern is identical. The technology works. The accountability does not.

So what do organizations do when they cannot achieve 100% and cannot define who owns the gap?

They build a fuse.

The accountability fuse

Now look at Saif again.

Uber’s public narrative is that the specialist is a transitional role. Once the technology matures, the specialist goes away. In fact, the fully driverless version is already running on Yas Island. The Saifs of the world are being “phased out.”

I disagree. This role will not disappear, because it was never a product of technological immaturity. It exists because technology can never absorb accountability. And the 0.01% that falls outside the algorithm’s reach (the police car in a traffic jam, a funeral procession, a child darting out between parked cars, a construction worker waving you through a red light) requires human judgment that no accuracy metric can replace.

More critically, it requires someone who, when things go wrong, can be pointed at and asked “why didn’t you take over?”

Think carefully about Saif’s position:

If the car drives perfectly, he is redundant. His presence proves the technology works, which means his job should be eliminated.

If the car fails and he intervenes in time, he saves the day. But he also proves the technology was not ready. This directly contradicts his employer’s entire valuation story.

If the car fails and he does not intervene in time, he absorbs all liability. He becomes the next Rafaela Vasquez. In 2018, an Uber autonomous test vehicle struck and killed a pedestrian in Arizona. Prosecutors found no basis for criminal liability against Uber. Vasquez, the safety operator sitting in the driver’s seat, was charged with negligent homicide. The researcher Madeleine Clare Elish calls this the “moral crumple zone.” When the system fails, the technology walks away intact. The human stays behind and takes the fall.

Three paths. None of them lead anywhere good.

After years of doing OD, I have seen this pattern in traditional organizations more times than I can count. I have a name for it: the accountability fuse.

A fuse exists to blow. It is engineered into the circuit specifically to absorb the overload and protect the expensive system behind it. When it blows, you do not repair it. You throw it away and put in a new one.

When strategy succeeds, credit flows upward. When strategy fails, the fuse blows. Middle managers are the classic accountability fuse. Saif is the autonomous driving version.

The difference: traditional accountability fuses at least get a title and a year-end bonus.

Saif does not even get a tip.

This is not a technology problem

I am not here to judge whether autonomous driving is good or bad. Honestly, the ride was smooth. Smoother than some of the ride-hailing services I have taken in Shanghai.

What I care about is something else.

When an organization decides to hand decision-making authority to AI but refuses to clearly state who has final authority at the critical moment, it does not leave a blank. It creates a role to fill that blank.

It gives the role an ambiguous title. It uses the payment system to erase the role’s existence. It uses the phrase “transitional period” to imply that all of this is temporary. It writes “Vehicle Specialist” in every press release but never defines what authority or obligation that entails.

This is not a failure to think things through. This is having thought things through all too well.

This is organizational design.

Back to the beginning

That day, Saif opened the door from the inside. I never had to press “unlock.” But Yas Island has already gone fully driverless. Next time, there will be no Saif. Just the button.

You press it. It greys out.

That greyed-out button did not just lock you in. It locked accountability out.

This is the second piece in the AI Decision Rights series. First piece: