What OpenClaw Actually Can and Can’t Replace

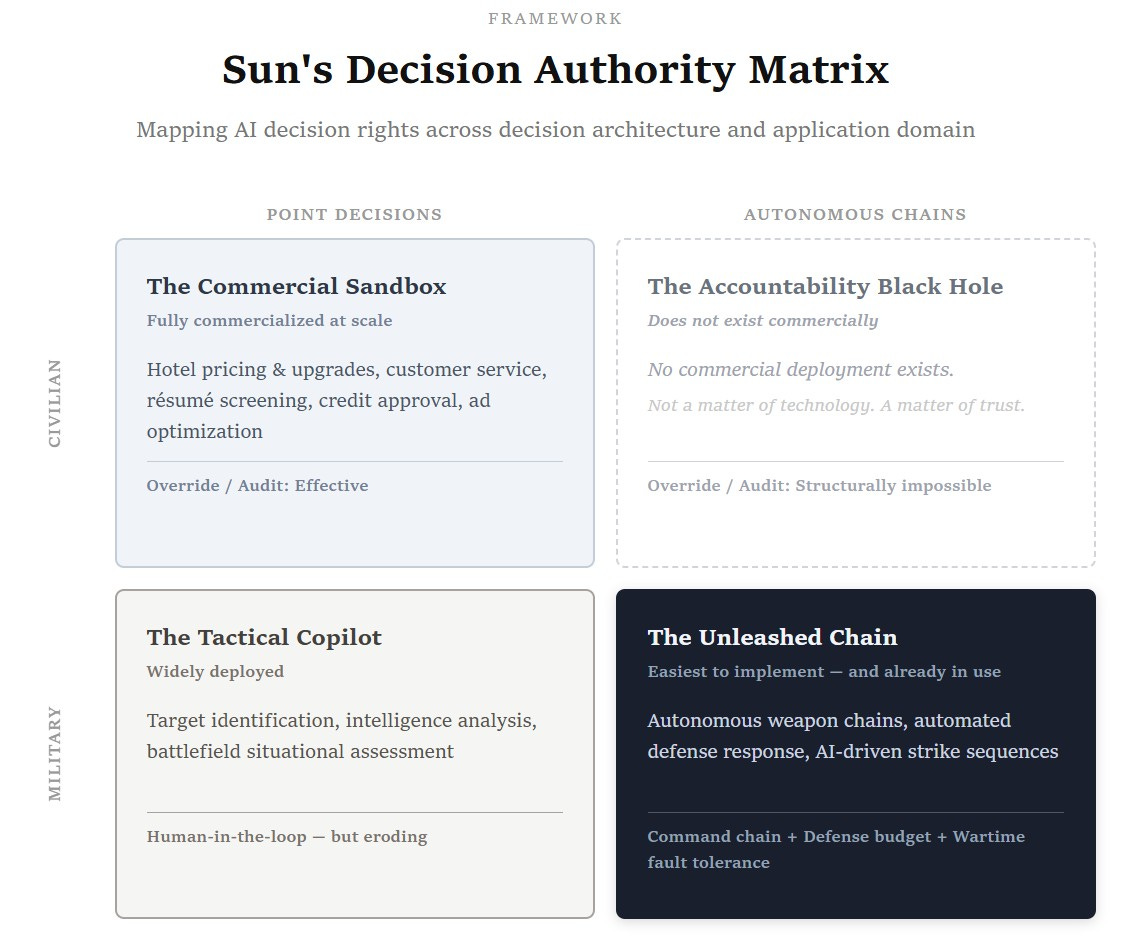

Sun’s Decision Authority Matrix — OD Behind the Curtain

OpenClaw (the agentic AI tool that operates your computer on your behalf) has sparked a wave of enthusiasm among knowledge workers, first in Western markets last month and now surging across China. The pitch is seductive: hand your routine tasks to an AI agent, free yourself for higher-value work.

But this expectation is built on a flawed assumption: that your routine tasks are simple.

They are not.

Your “simple” work was never simple

Consider the most mundane task in any corporate calendar: scheduling a cross-departmental meeting.

In OpenClaw’s logic, scheduling means scanning calendars, finding overlapping availability, and sending a link. Anyone who has actually coordinated across business units knows that scheduling is not time management. It is political negotiation.

You need 12 stakeholders in a room. If the head of the largest business unit can’t attend, the meeting is pointless. Cancel it. If he attends but the head of the second-largest unit is unavailable, you need her deputy, C, because C is the only person with enough seniority and delegated authority to commit on the spot. If neither she nor C is available, the meeting collapses again, because you cannot let the first executive feel that he showed up in person while the other unit did not even send someone who could speak with authority.

This entire judgment chain fires in your head instantaneously. If you tried to encode every stakeholder’s temperament, history, informal authority lines, and political sensitivities into rules that OpenClaw could follow, you would spend more time writing the rules than scheduling ten meetings yourself.
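To make the point concrete, here is a minimal sketch of what encoding just the three branches above might look like. The class and field names (`Availability`, `head_unit_1`, `head_unit_2`, `deputy_c`) and the function `should_hold_meeting` are hypothetical and chosen for illustration; nothing here reflects how OpenClaw itself represents rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Availability:
    """Who can attend. All field names are hypothetical."""
    head_unit_1: bool  # head of the largest business unit
    head_unit_2: bool  # head of the second-largest business unit
    deputy_c: bool     # deputy C, who holds delegated authority for unit 2


def should_hold_meeting(a: Availability) -> bool:
    """Encode only the three branches described in the text."""
    # Without the head of the largest unit, the meeting is pointless.
    if not a.head_unit_1:
        return False
    # Unit 2 must send someone who can commit on the spot:
    # either its head or deputy C.
    return a.head_unit_2 or a.deputy_c


# The head of unit 2 is away, but deputy C can stand in.
decision = should_hold_meeting(
    Availability(head_unit_1=True, head_unit_2=False, deputy_c=True)
)
```

Even this toy version covers only three of the twelve stakeholders and says nothing about temperament, history, or political sensitivity. Each of those would add further fields and branches, and each would have to be kept current by hand.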

And when OpenClaw gets one branch wrong and offends the wrong person, who takes the blame?

You do.

If you schedule the meeting yourself and it goes sideways, you own a decision you actually made. But once OpenClaw is in the loop, it produces a mechanically “optimal” solution that satisfies no one, and you are left standing in front of the room absorbing the impact of a decision you did not make. Academia has a term for this, coined by Madeleine Clare Elish: the moral crumple zone. The system fails, the algorithm disappears from view, and the human operator absorbs the full force of accountability.

First, understand what OpenClaw actually is

There is a widespread confusion among OpenClaw enthusiasts: they conflate the capabilities of AI models with the capabilities of OpenClaw.

Writing reports, analyzing data, generating summaries — AI models (GPT, Claude, Gemini) can genuinely accelerate these tasks. But they do this on their own. You do not need OpenClaw to use AI for writing or analysis.

OpenClaw’s actual value proposition is not AI intelligence. It is computer operation: automated clicking, form-filling, moving data between applications, orchestrating tasks across devices. It is a tool for the connectivity and operation layer, not for the judgment layer.

To be fair, OpenClaw handles ambiguous goals better than traditional RPA. It does not rely entirely on predefined rules and stable interfaces. But its core capability remains operational orchestration, not contextual judgment. In most real enterprise scenarios, its marginal improvement over mature RPA solutions is limited.

The work that looks “low-value” — scheduling, cross-departmental coordination, stakeholder management — is built entirely on complex decision trees accumulated through years of human experience. A task appearing simple does not mean the judgment behind it is shallow. The more routine the coordination work, the more it depends on tacit knowledge that cannot be codified.

Back to the flawed expectation: you thought OpenClaw would handle your “low-value” routine tasks so you could focus on “high-value” analysis. The reality is that AI models (not OpenClaw) can already help with your analysis. And the routine tasks you wanted to offload are precisely where OpenClaw cannot reach.

A calculation for business leaders

Many executives get excited the moment they hear “AI automation.” That is understandable. But two layers of expectation need to be examined separately.

Layer one: fully autonomous end-to-end operations — AI running the entire chain from sales forecasting to procurement to logistics to finance, with no human intervention.

This is not achievable in civilian commercial environments. Not because the technology is insufficient, but because the accountability structure does not permit it.

Sun’s Decision Authority Matrix defines this as a structural deadlock. An autonomous AI chain that does not place a human gate before irreversible commitment nodes (contracts signed, warehouse slots released, penalties paid) cannot assign accountability when things go wrong. But the moment you add a gate, the chain is no longer autonomous — it collapses back into segmented human approval. No gate means no accountability. A gate means no autonomy. This deadlock cannot be solved by spending more money.
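The deadlock can be checked mechanically. The sketch below is an illustration under deliberately simplified definitions, which are assumptions of this example and not taken from the matrix itself: a chain counts as "autonomous" if no step waits for human approval, and as "accountable" if every irreversible step has a human gate. The names `Step`, `is_autonomous`, `is_accountable`, and `some_placement_satisfies_both` are hypothetical.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Step:
    name: str
    irreversible: bool  # e.g. contract signed, penalty paid
    human_gate: bool    # a person must approve before this step runs


def is_autonomous(chain):
    """Assumed definition: no step waits for human approval."""
    return not any(step.human_gate for step in chain)


def is_accountable(chain):
    """Assumed definition: every irreversible step has a human gate."""
    return all(step.human_gate for step in chain if step.irreversible)


def some_placement_satisfies_both(steps):
    """Try every way of placing gates on the steps.

    `steps` is a list of (name, irreversible) pairs. Returns True if at
    least one gate placement is both autonomous and accountable.
    """
    for gates in product([False, True], repeat=len(steps)):
        chain = [
            Step(name, irreversible, gate)
            for (name, irreversible), gate in zip(steps, gates)
        ]
        if is_autonomous(chain) and is_accountable(chain):
            return True
    return False


# A hypothetical chain with one irreversible commitment node.
with_commitment = some_placement_satisfies_both(
    [("forecast_sales", False), ("sign_contract", True)]
)

# The same chain with the irreversible node removed.
without_commitment = some_placement_satisfies_both(
    [("forecast_sales", False)]
)
```

Under these definitions, `with_commitment` is `False` and `without_commitment` is `True`: the conflict comes from the irreversible node itself, not from how cleverly the gates are arranged, which is why a larger budget does not resolve it.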

Truly autonomous chains currently operate only in military contexts, for three specific reasons:

Chain of command eliminates accountability diffusion;

Defense budgets absorb extreme error costs;

Wartime environments inherently accept irreversible consequences.

Commercial enterprises meet none of these three conditions.

Layer two: fine, full autonomy is off the table. But surely OpenClaw can replace enough workers on individual tasks to cut headcount? That alone would justify the investment.

The analysis above already answers this. The operational orchestration work that OpenClaw can handle is already covered by combinations of mature RPA and AI tools, so its marginal improvement is limited. And the employees you want to cut are doing exactly the kind of tacit-judgment coordination work described earlier: who must attend, who can be absent, what can be said, what cannot wait. None of these judgments can be delegated to OpenClaw.

The people you cut may be the people you most need to keep.

Conclusion

Our seemingly mundane daily work is stitched together from countless small, deeply human judgments. AI models can write your reports, run your analyses, and search for information faster than you can — but none of that requires OpenClaw. And the routine operations OpenClaw promises to handle for you? Its marginal improvement is far smaller than the demo videos suggest.

It is precisely because this work cannot be automated that it carries real value. Your tacit judgment is what makes you irreplaceable. Do not let the hype around a tool devalue the most valuable thing you bring to work. You thought you were installing a tireless digital worker called OpenClaw. But stripped of the tacit knowledge that lives in your head and cannot be turned into code, it quickly becomes something else entirely: OpenFlaw.

For the full framework on where AI decision authority can and cannot be exercised: Sun’s Decision Authority Matrix